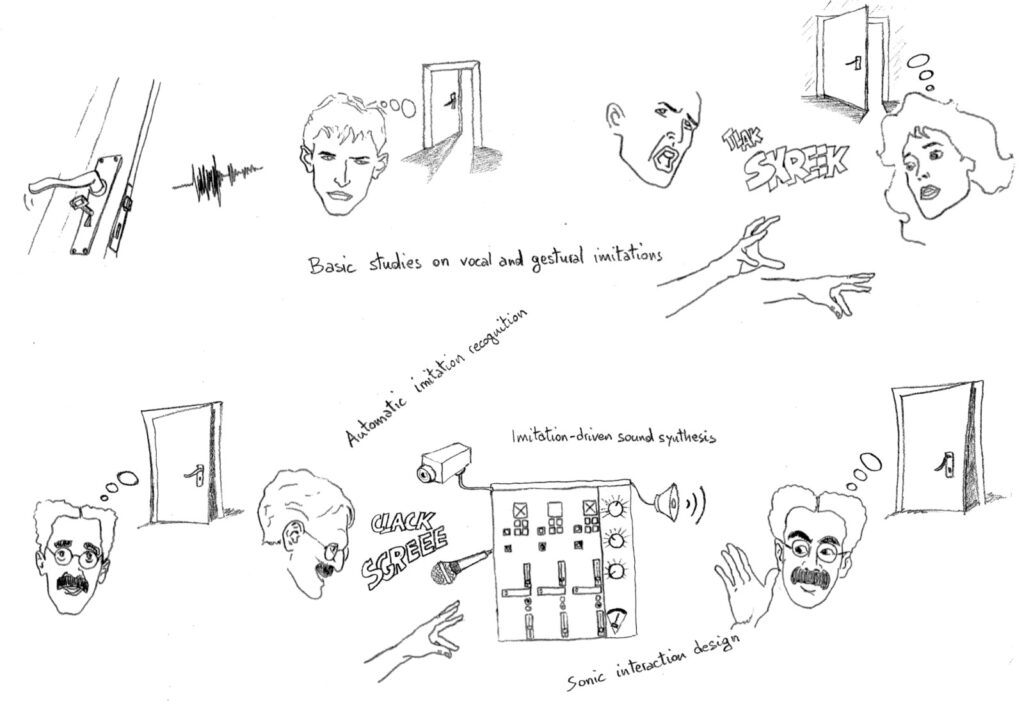

Sketching is at the root of any design activity. In visual design, hand and pencil are still the primary tools used to produce a large variety of initial concepts in a very short time. In product and media design, however, the sonic behavior of objects is also of primary importance, as sounds may afford seamless and aesthetically pleasing interactions. But how might one sketch the auditory aspects and sonic behavior of objects in the early stages of the design process? Non-verbal sounds, more than speech, are naturally and spontaneously used in everyday life to describe and imitate sonic events, often accompanied by expressive manual gestures that complement, qualify, or emphasize them. The SkAT-VG project aims to enable designers to use their voice and hands directly to sketch the auditory aspects of an object, thereby making it easier to exploit the functional and aesthetic possibilities of sound. The core of this framework is a system that interprets users’ intentions from their gestures and vocalizations, selects appropriate sound synthesis modules, and enables iterative refinement and sharing, as is commonly done with drawn sketches in the early stages of the design process. To reach its goal, the SkAT-VG project draws on an original mix of complementary expertise: voice production, gesture analysis, cognitive psychology, machine learning, interaction design, and audio application development. The project tasks include case studies of how people naturally use vocalizations and gestures to communicate sounds, evaluation of the current practices of sound designers, basic studies of sound identification through vocalizations and gestural production, gesture analysis and machine learning, and development of the sketching tools.